Originally Posted by Raelia

No.

Neutral is 1.0. The original value does not change.

Push is anything greater than 1.0. The original value increases.

Pull is anything less than 1.0. The original value decreases.

(This is for multiplication; neutral would be 0 if it were addition, obviously.)

What you're defining is a greater push vs a lesser push; ie: the upper half of values of the secondary modifier vs the lower half of values of the secondary modifier. Or perhaps push and pull relative to the average secondary value, when you actually stated that it was relative to the primary value.

Originally Posted by Raelia

Again, no.

My posts are detailed for a reason: If I make an error, or reach an invalid conclusion, people who notice or just generally disagree with me can actually go through step by step to see how I reached that conclusion, and point out where I missed a step, made a mistake, made an erroneous assumption, or whatever. They can then point that out, and I can go back and fix things and improve my own understanding as well.

A lack of explicitly detailed math is far more an indication that the writer doesn't want to have their work scrutinized, and makes any assertions or conclusions far more suspicious because they are neither verifiable nor repeatable.

but the margin is so small (148/59/2.52, SqRt that for what percentile each randomizer needs to roll, then subtract from 1 again and square that for two randomizers, then take the reciprocal for odds) this would require 190,800 hits to occur, statistically.

- 148/59/2.52

148/59 is the absolute minimum pDif necessary to reach 148. 2.52 is the maximum pDif in your model. This division gives the ratio between that minimum and maximum; however, it has no relation to the probability of that result occurring. That would only be the case if pDif could cover the full range of 0 to 2.52, which it clearly does not.

- square that for two randomizers

Incorrect. That only applies if the two randomizers are equivalent. In this case, they are not. You need a specific portion of the original randomizer combined with a specific portion of the secondary randomizer, and those portions cannot be assumed to be equal.

Your illustration with the 80-sided die and the 20-sided die was more correct. The probability of rolling 100 is the combined respective probabilities of rolling an 80 and a 20. However you can't simply take the probability of rolling an 80 and square it to get the overall probability; it's nonsensical, since the probability of rolling an 80 on the first die has nothing to do with the probability of rolling a 20 on the other die.

For this case, you need the probability of a primary pDif result that has -any- possibility of reaching 148 when combined with the secondary randomizer. The lowest primary pDif is clearly the value that, if you got exactly a 1.05 on the secondary roll, would give you a 148.

148 / 59 / 1.05 = 2.38902

Since it has to be equal to or greater than that value, and I'd prefer to work with no more than 3 decimal places, I'll use 2.390.

The maximum value that the primary pDif can be in your model is 2.40, so the range of potential primary pDif values that could possibly generate a 148 would be 2.400 - 2.390 = 0.01.

The full quantity of values that the primary pDif can encompass is +0.4 - (-0.5) = 0.9. Therefore the probability that the primary pDif can have any chance whatsoever of resulting in a final value of 148 is 0.01 / 0.90 = 1.111%. (Note: This uses the full spread rather than the slightly shortened spread I used in the original post; that is, it doesn't account for the lower pDif limit being of questionable validity.)

The probability of actually reaching 148 then depends on the secondary roll. This ranges from near 0 (getting exactly 1.05 on the multiplier; this may possibly be 2% if it's 1 chance out of 50, using 50/1024 or thereabouts, but will treat it as 0 for the combined probabilities) to whatever the chance is when combined with the maximum pDif.

At maximum primary pDif, the secondary modifier would need to be at least (148/59) / 2.4 = 1.0452. The percentage of the time that could occur would be (1.05 - 1.0452) / (1.05 - 1.00) = 9.6%.

The overall probability area of those combined values is a triangle, so the total probability is 1/2 A B, or (1.11% * 9.6%) / 2 = 0.05336%. In terms of frequency, invert that value for a chance of 1 in 1874, two orders of magnitude more common than your assertion.

With 1850 sample points in Masa's data, there's a 37.25% chance of a 148 not showing up if it were in fact possible, and likewise a 62.75% chance that it -would- show up if it were possible. You'd need about 5600 sample points to have a 95% chance of the value showing up if it were possible.
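The sample-size figures above follow from treating each hit as an independent trial. A minimal Python sketch of that arithmetic, assuming independence between hits (the function names are made up for illustration):

```python
import math


def chance_seen(p_single: float, n_samples: int) -> float:
    """Chance that a result with per-hit probability p_single
    appears at least once in n_samples independent hits."""
    return 1.0 - (1.0 - p_single) ** n_samples


def samples_needed(p_single: float, confidence: float) -> int:
    """Smallest number of hits giving at least `confidence` chance
    of seeing the result at least once."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - p_single))


if __name__ == "__main__":
    p_148 = 1 / 1874  # the 1-in-1874 estimate from the triangle calculation
    print(f"chance of seeing a 148 in 1850 hits: {chance_seen(p_148, 1850):.2%}")
    print(f"hits needed for a 95% chance: {samples_needed(p_148, 0.95)}")
```

The same two functions can be reused for the 91 and 92 estimates by swapping in their 1-in-X odds.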

Originally Posted by Raelia

Using the same procedure as detailed above:

Primary pDif range that can generate a 91: 0 to (91.9999/59 - 1.55) = 0.00932 out of the full range of 0.85 = 1.096%

Maximum secondary multiplier given primary pDif of +0.0: 91.9999/59 / 1.55 = 1.00601 out of 1.05 = 12.03%

Overall probability: 1.096% * 12.03% / 2 = 0.0660%, or 1 in 1516.

70.5% chance of seeing such a result in 1850 samples.

Probability of a 92 showing up, given a -0.45 LIA:

Primary pDif range that can generate a 92:

1) The above 0.00932 (1.096%) chance of something between 91.45 and 91.9999 when combined with secondary modifiers between 1.00601 and 1.0169 at the low end and 1.0 to 1.01087 at the high end.

Secondary range at low end: 0.01089

Secondary range at high end: 0.01087

Overall, range of valid secondary values seems to remain consistent (as should be expected, really) for the full extent of the primary values. Will use 0.01088 out of 0.05 for 21.76%.

Total probability for when primary is 91.xxx (no triangle in this case): 1.096% * 21.76% = 0.2385%

2) (92/59 - 1.55) to (92.9999/59 - 1.55) = 0.01695 out of the full range of 0.85 = 1.994%, combined with a secondary modifier between 0 and 1.01087 at the low end, down to 0 at the high end.

Total probability for when primary is 92.xxx: 1.994% * 21.76% / 2 = 0.2169%

Overall probability: 0.2385% + 0.2169% = 0.4554%, or 1 in 220

99.98% chance of seeing such a result in 1850 samples. On average, expect 8.4 such results. Total actually seen: 10

Average if minimum value was exactly 92.0 would be 1 in 460, or about 3 expected over 1850 results. Given the significantly higher numbers seen, that would seem to support the idea of a minimum somewhat below 92.

Originally Posted by Raelia

The chance of a 91 occurring here is worked out the same way as the chance of a 92 occurring in the above example.

Min pDif: 1.525

Max secondary multiplier on top of min pDif results in a value above 91, so we can treat everything from min up to 91.0 as a square segment.

pDif for 91.0: 1.5424

Primary range (square): 1.5424 - 1.525 = 0.0174 out of 0.875 = 1.989%

Secondary range covering 1.0 spread: (91.9999/59 / 1.525) - (91.0/59 / 1.525) = 1.02251 to 1.01139 = 0.01112

0.01112 out of 0.05 = 22.24%

Chance (square range): 1.989% * 22.24% = 0.4424% (1 in 226)

Chance (triangle range):

Primary pDif from 91/59 to 91.9999/59 = 1.5593 - 1.5424 = 0.0169 out of 0.875 = 1.931%

Secondary range at 91.0: 91.9999/91 = 1.01099 out of 1.05 = 21.98%

Chance (triangle range): 1.931% * 21.98% / 2 = 0.4244%

Total probability: 0.4424% + 0.4244% = 0.8668% (1 in 115)

1.0e-5% chance (1 in 9.9 million) of -not- seeing it if it were possible, in 1850 samples.

~~~~~~~~~~~~~~~

So 0.45 is somewhat possible, as there's still a 30% chance that a 91 wouldn't show up, but 0.475 is just flat out not believable.

Given the probabilities for a 92 occurring, I'd say that there's a strong argument for -0.45 (at least as an approximation) for the minimum pDif at 2.0 cRatio. Whether that's a fixed value or the result of a different calculation formula is another matter.


-

2012-01-09 15:31 #21Chram

- Join Date

- Sep 2007

- Posts

- 2,526

- BG Level

- 7

- FFXI Server

- Fenrir

-

2012-01-09 16:54 #22Custom Title

- Join Date

- Nov 2008

- Posts

- 1,066

- BG Level

- 6

- FFXI Server

- Diabolos

I fucking love it Moten. You're damned right about my erroneously using the full scaling of pDif instead of the active RAE+LIA range. I think that was why I was subtracting 148/59 from 2.52 instead of dividing at first with the intent to divide that result by the 0.90 RAE+LIA range, then lost that step somewhere in my mind before going back and 'correcting' to that division of 148/59/2.52.

So using 1/(4.765*(1 - √(1 - (2.52 - 148/59)/0.85))^2) instead, I get 1 in 4534 to see 148 damage with 59 base, or about 66% chance to not see it over 1850 hits. This is where this comes in:

The 4.765 comes from a randomizer balance correction of 17:1 (0.85/0.05) vs 9:9: (1/(1/9)^2)/(17*1), giving 81/17.

To equivalence and sorta prove this, d80 and d20 have that 1 in 1600 chance of rolling 100. What I'm doing is using two d50s (not two d80s as you surmise) which are 1 in 2500 to roll 100 and 'correcting' this by dividing 1.5625 to get 1600. So long as the ratio of the two randomizers remains the same (You can use 8000:2000 vs 5000:5000 and such) this is valid.

Yes, I make this shit up as I go along, and yes most of the time it works.

Anyway, here's the best part now that you've shown me that 0.45 LIA is valid: Take any of Masa's data and calculate Dmg*(cRatio+RAE-LIA)*1.025 and you get within a point of the average that was parsed. Working with this I find telltales that RAE-LIA should be only 0.05 above 1.66 (10/6?) cRatio and only 0.025 for his 1.95 cRatio and 2.0 data.

-

2012-01-09 19:43 #23Chram


Originally Posted by Raelia

Where does the 9:9 come from?

Originally Posted by Raelia

If I'm understanding you correctly, the ratio of the two randomizers does *not* remain the same.

Suppose the primary pDif generated exactly 92.0 (so 92/59 = 1.55932 pDif). What range of the secondary pDif will retain a value of 92?

Max final pDif is 92.9999/59 = 1.57627. Secondary modifier can be between 1.0 and 1.57627/1.55932 = 1.01087, for a range of 0.01087.

* It can be shown that this can ultimately be simplified to x/N, where N is the starting value (eg: 92) and x is the final range value it covers (eg: 1 for the case of 92.0 to 92.9999). 1/92 = 0.01087

Suppose the primary pDif generated exactly 147.0 (so 147/59 = 2.49153 pDif). What range of the secondary pDif will retain a value of 147?

Max final pDif is 147.9999/59 = 2.50847. Secondary modifier can be between 1.0 and 2.50847/2.49153 = 1.00680, for a range of 0.00680.

* 1/147 = 0.00680

So the valid range on the secondary modifier for a fixed subset of final values (eg: 91.450 to 91.999) is 60% larger at the low end than at the high end. Since the range of the primary pDif is the same in both cases, the ratio between them is necessarily different at different ends of the equation.
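As a quick check of the x/N simplification, here is a small Python sketch. It assumes a base damage of 59 and uses a window width of exactly 1.0 rather than 0.9999; `secondary_window` is a name invented for this example.

```python
def secondary_window(n_damage: float, base: float = 59.0, width: float = 1.0) -> float:
    """Range of the secondary multiplier (above 1.0) that keeps a hit
    starting at exactly n_damage inside [n_damage, n_damage + width)."""
    primary_pdif = n_damage / base          # e.g. 92 / 59 = 1.55932
    max_final_pdif = (n_damage + width) / base
    return max_final_pdif / primary_pdif - 1.0  # algebraically width / n_damage


if __name__ == "__main__":
    low = secondary_window(92)
    high = secondary_window(147)
    print(f"window at 92:  {low:.5f}")
    print(f"window at 147: {high:.5f}")
    print(f"low-end window is {low / high - 1:.0%} larger")
```

The base damage cancels out, which is why the result depends only on the starting damage value.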

Therefore the assumptions you seem to be making in order to simplify the formula do not appear to be valid. Overall I'd prefer it if you showed how you actually derived that formula.

I also want to go through how you got the 4.765 in the first place.

Let's look at the 80 and 20 dice again.

Chance of rolling an 80: 1/80

Chance of rolling a 20: 1/20

Chance of rolling 80+20: 1/80 * 1/20 = 1/1600

Chance of rolling 50+50 and pretending they were 80+20:

1/50 * 50/80 * 1/50 * 50/20

= 50/80 * 50/20 * (1/50)^2

= 2500/1600 * (1/50)^2

which eventually simplifies back to 1/1600, same as the original.
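The dice arithmetic can be verified by brute-force enumeration. A minimal sketch using exact fractions (the helper name `chance_of_total` is just for illustration):

```python
from fractions import Fraction
from itertools import product


def chance_of_total(sides_a: int, sides_b: int, total: int) -> Fraction:
    """Exact chance that two fair dice with the given side counts sum to `total`."""
    outcomes = product(range(1, sides_a + 1), range(1, sides_b + 1))
    hits = sum(1 for a, b in outcomes if a + b == total)
    return Fraction(hits, sides_a * sides_b)


if __name__ == "__main__":
    direct = chance_of_total(80, 20, 100)   # d80 + d20
    equal = chance_of_total(50, 50, 100)    # two d50s
    # Rescale each d50 term as in the post: 50/80 and 50/20.
    corrected = equal * Fraction(50, 80) * Fraction(50, 20)
    print(direct, equal, corrected)
```

The rescaled two-d50 value matches the direct d80 + d20 value, so the 'correction' only restores the original product of the two individual probabilities.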

Your 'correction' value is 81/17. 81 comes from 9*9, which appears to be an arbitrary value. 17 comes from 0.85/0.05. However that doesn't make sense based on your own explanation and the above illustration.

Your basic ratio should be 1/primary * 1/secondary (ignoring that the ratios are not static), or 1/0.85 * 1/0.05 = 1.17647 * 20 = 23.5294

81/23.529 = 3.4425

Running that through your equation generates a chance of 1 in 1823.

Reworking mine to be less manual work:

Since we know how to calculate the range of the secondary modifier we're interested in (x/N), and we know the limits of the secondary modifier (S = 0.05), we can deduce that the percentage chance of the secondary modifier covering a fixed range amount to be (x/N)/S = 20*(x/N).

The probability of the primary pDif being within the range we're interested in is p/P, where P = 0.85 (or whatever the full spread is determined to be), and p is determined by what we're looking for (eg: 2.52 - the minimum pDif that can possibly generate 148).

Final probability is the product of those two, so: (p/P) * 20 * (x/N).

For a given fixed value of P (eg: 0.85), we can take: 20 * (p/(17/20)) * (x/N) = (400/17) * p * x / N

Coincidentally, 400/17 = 23.5294, the value we got above when working out 1/primary * 1/secondary.

Of course since x is dependent on p, you then need to do a summation (integral, really, but that's extra work I don't want to do right now) over the range of p.

In the case of the max value, x increases from ~0 to its max result over the range of p. The probability can thus be considered 0 at the minimum, and the full formula at the max, with the final value being the average of those two (ie: triangular summation).

Since it starts at 0, final would be (1/2) * (400/17) * p * max(x) / N = (200/17) * p * max(x) / N

Damage at max pDif would be 148.68 if using 2.4*1.05. max(x) is thus 0.68, while N is 148. p is 2.4 - (148/59)/1.05 = 0.01098

(200/17) * p * max(x) / N

= (200/17) * 0.01098 * 0.68 / 148

= 0.0005933

= 1 in 1685

66.6% chance of generating a 148 in 1850 hits.
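For anyone who wants to plug in other numbers, the triangular approximation above can be written as a small Python function. This is only a sketch of the same arithmetic; the function and parameter names are invented here, and it inherits the assumption that the probability is 0 at the cusp.

```python
def max_tail_probability(target: int, base: float, max_primary: float,
                         max_secondary: float, primary_spread: float) -> float:
    """Triangular estimate of the chance of hitting `target`, the highest
    reachable damage value: (1/2) * (p / P) * (max(x) / N) / S."""
    secondary_spread = max_secondary - 1.0                 # S, e.g. 0.05
    p = max_primary - (target / base) / max_secondary      # usable primary range
    max_x = base * max_primary * max_secondary - target    # e.g. 148.68 - 148
    return 0.5 * (p / primary_spread) * (max_x / target) / secondary_spread


if __name__ == "__main__":
    prob = max_tail_probability(target=148, base=59, max_primary=2.4,
                                max_secondary=1.05, primary_spread=0.85)
    print(f"probability: {prob:.7f}, about 1 in {1 / prob:.0f}")
```

With P = 0.85 and S = 0.05 the constant factor works out to the same 200/17 used above.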

Edit: bleh, stupid smilies. Is there a way to default those to off when writing posts?

-

2012-01-09 20:11 #24Custom Title


I honestly haven't the faintest idea. It's a mishmash of doing shit that works by hand and eye and then transpositing that method to an inline equation. I got it in pretty much the way I explained it, then realized that equal randomizers would produce a different result from inequal, so developed the correction factor. You wouldn't believe how often I ask myself that very same question.

Blah anyway... this is why I don't allow myself to go to Vegas. "I am not a Gambler, I am a Probability Enthusiast."

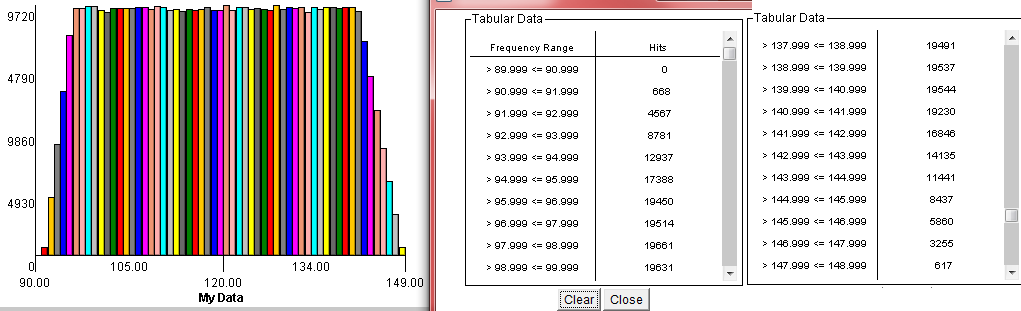

Got sick of trying to math it, so decided to simulate it. I've got a NICE chunk of generated data for you. Fifty thousand hits. Generated in Graph.exe with:

Base damage = 59

cRatio = 2.0

RAE = 0.4

LIA = 0.45

floor((59)*(2)*((1-.45/2)+rand*((.4+.45)/2))*(1+rand*(0.05)))

Generated a table of 50k hits to a .csv file, dumped that into a histogram generator I had to find as opposed to firing up matlab and making one myself...

148 damage: 30 times in 50,000, or 1 in 1666.

91 damage 22 times, or 1 in 2272.

Mean of 118.852

92 damage: 1 in 209

147 damage: 1 in 320

This is perfectly in keeping with Masa finding 92 and 147 within just a few hundred hits, but missing 91 and 148.
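For anyone without Graph.exe, the same simulation can be sketched in Python. This is a re-implementation of the expression above, assuming `rand` is an independent uniform draw on [0, 1) each time it appears; the function name and the seed parameter are additions for this example, and the exact counts will differ from run to run.

```python
import math
import random
from collections import Counter


def simulate_hits(n_hits: int, base: int = 59, c_ratio: float = 2.0,
                  rae: float = 0.4, lia: float = 0.45, seed=None) -> Counter:
    """Histogram of floor(base * cRatio * primary * secondary) over n_hits."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(n_hits):
        # Primary randomizer: (1 - LIA/cRatio) + rand * ((RAE + LIA) / cRatio)
        primary = (1 - lia / c_ratio) + rng.random() * ((rae + lia) / c_ratio)
        # Secondary randomizer: 1.00 to 1.05
        secondary = 1 + rng.random() * 0.05
        counts[math.floor(base * c_ratio * primary * secondary)] += 1
    return counts


if __name__ == "__main__":
    n = 50_000
    hist = simulate_hits(n)
    for dmg in (91, 92, 147, 148):
        seen = hist.get(dmg, 0)
        odds = f"1 in {n / seen:.0f}" if seen else "not seen"
        print(f"{dmg} damage: {seen} times ({odds})")
```

Passing a fixed `seed` makes a run repeatable, which helps when comparing against a hand-calculated estimate.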

Screw it. Let's do a million hits :D and below that is 2-hander with 100 base damage to compare shapes:

It's easy to see how much finer the 2-hander data is at least. Hold something up to the screen and they have the same shape though.

-

2012-01-09 22:58 #25Chram


Originally Posted by Raelia

Similar to my predicted value of 1 in 1685. Seems to validate the formula I came up with.

The million-hit sample was slightly lower, 1 in 1620. Would make sense in that the formula I came up with assumed 0 probability at the cusp, when it should really be like 2%; raising the probability a tiny bit would drop the 1 in X value a small amount.

The shape of the graph tails also matches parsed data; the tail on the lower end is shorter than on the higher end (4 points on lead end plus 1 slightly below midrange, while there are 6 points on the trailing end plus 1 slightly below midrange). Does not show the imbalance seen in above vs below cRatio segments, though.

Will have to keep this in consideration when I go back to the general data review.

-

2012-01-10 03:57 #26

If we accept the 1~1.05 multiplier (which people do not seem to debate anymore), then the weirdest part of the pDIF equation is the plateau around 1. It is known to affect the minimum pDIF, but Masa couldn't test the maximum non-crit pDIF due to the low attack involved. I will try to do it over the next week using Prelatic Pole and Rafael's Rod on DNC/BLM against Greater Colibri.

If anyone on Lakshmi has a Rafael's Rod they'd like to loan me, that would really speed this up.

-

2012-01-10 08:44 #27Chram


Byrth: there's more to the plateau than a simple "you can't have a damage value below 1.0". The frequency of damage at exactly 1.0 increases as pDif goes down.

Here's a short little bit from my warp cudgel test. Base damage is 15+8 = 23. Attack is 337, and this is for the lvl 82 birds, so defense of 327, for a cRatio of 1.0306.

Code:
Melee
17: 14
18: 26
19: 22
20: 16
21: 18
22: 25
^ 23: 79
+ 24: 81
25: 14
26: 21
27: 17
28: 17
29: 14
30: 14

Excluding the damage values of 23 and 24, the average frequency is 18.2. 23 and 24 have frequencies more than 4 times that average.
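The spike figures can be recomputed directly from the posted histogram. A short Python sketch, with the counts transcribed from the parse above (the variable names are arbitrary):

```python
# Damage value -> number of hits, transcribed from the warp cudgel parse.
melee = {17: 14, 18: 26, 19: 22, 20: 16, 21: 18, 22: 25, 23: 79,
         24: 81, 25: 14, 26: 21, 27: 17, 28: 17, 29: 14, 30: 14}
spike = {23, 24}

total_hits = sum(melee.values())
outside = [count for dmg, count in melee.items() if dmg not in spike]
avg_outside = sum(outside) / len(outside)
spike_hits = sum(melee[dmg] for dmg in spike)

if __name__ == "__main__":
    print(f"total hits: {total_hits}")
    print(f"average frequency outside the spike: {avg_outside:.1f}")
    for dmg in sorted(spike):
        print(f"{dmg}: {melee[dmg] / avg_outside:.1f}x the average")
    print(f"spike share of all hits: {spike_hits / total_hits:.1%}")
```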

You'll see that same general spike as cRatio goes up, and even after you reach the point where the minimum damage itself is always at 1.0 you'll still have a generally higher frequency at those points up until cRatio reaches about 1.5.

The only reasonable way I can explain it is that the minimum pDif is calculated like normal, however when it reaches the point of going below 1.0, the 'excess' values below 1.0 are changed to 1.0. Well, up to a point; there's also a minimum difference between cRatio and minimum actual damage, and eventually minimum damage must go below 1.0 pDif to maintain that spread. Even then, the pDif results that fall below the minimum allowed damage are still reset to 1.0.

-

2012-01-10 09:02 #28

I would apply it the opposite way, I think. You can get a plateau like that with a frequency spike at your desired value by adding the first randomizer to cRatio and then throwing the generated value into a piecewise equation. Also yeah, the frequency spike is very pronounced in Masa's data as well.

Spoiler: show

The reason not to do what I've suggested basically comes down to critical hits. The slope of the critical max (at least) is lower than we would expect from a model like this. We would expect the slopes to be essentially constant, because implementing anything else would be kind of a pain.

-

2012-01-10 10:13 #29

I'm sure this has been pointed out before, but Masa had 9 fSTR on the level 63 and 64 targets and 8 fSTR on the level 65 targets. That smooths the data out a lot. Look at the parses where he is critting over 3.0, check for 185 instead of 182, compare monster levels. (for the 1H samples in distribution study)

-

2012-01-10 10:23 #30Custom Title


Certainly strange but not inexplicable. The problem I have right now is whether it's a flooring at 1.0 before or after Secondary. If before then it's just the same step as capping crits at 3.0, but if after (like, 1.26-1.3 cRatio has dozens of 1.0 hits) it's a very strange extra step. This is when 100 base damage is handy because if it's before Secondary you'll see a whole bunch of values for 100 to 105 damage, but if after you'll see a slope to them and 1.0 would be fairly rare (about 20% of Secondary's range). If anyone just knows where to find Masa's raw data still it should be easy to see.

Anyway, crit busting might find us a distribution quirk in Secondary:

Simulated crit distribution with 52 base damage at capped ratio with:

floor((52)*range(0,1+[2.25-.45+rand*(.4+.45)],3)*[1+rand*(0.05)])

Versus this data from here:

Code:
Melee Crits
149: 1
150: 2
151: 3
153: 2
154: 3
155: 3
156: 32 -- 3.0
157: 23
+ 158: 34
^ 159: 19
160: 31
161: 22
162: 24
163: 19
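The simulated crit distribution can be reproduced roughly in Python. This sketch assumes Graph's `range(a, x, b)` clamps x into [a, b] and that each `rand` is an independent uniform draw; the function name and seed parameter are made up for the example.

```python
import math
import random
from collections import Counter


def simulate_crits(n_hits: int, base: int = 52, rae: float = 0.4,
                   lia: float = 0.45, seed=None) -> Counter:
    """Histogram of crit damage at capped ratio, with pDif clamped to 3.0
    before the 1.00-1.05 secondary multiplier is applied."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(n_hits):
        raw = 1 + (2.25 - lia + rng.random() * (rae + lia))  # 2.80 to 3.65
        primary = min(max(raw, 0.0), 3.0)                    # assumed clamp
        secondary = 1 + rng.random() * 0.05
        counts[math.floor(base * primary * secondary)] += 1
    return counts


if __name__ == "__main__":
    hist = simulate_crits(10_000)
    for dmg in sorted(hist):
        print(f"{dmg}: {hist[dmg]}")
```

Because most rolls land above 3.0 and get clamped, the bulk of the output sits between 156 and 163, which is the shape to compare against the parsed crit data.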

And I saw Byrthnoth's 1.0 floor distribution in BGBucket already. Because the spike has a width of 1.00 to 1.05, it looks to be 1.0s before Secondary, so it's the same clamping operation as crits applied to hits that range below 1.0 Intermediate pDif while your cRatio is still greater than 1.25 (LIA then changes to 0.25 below 1.25 cRatio to intersect the 1.0 floor and continue minimum damage going lower).

Because as Moten said the frequency of 1.00 to 1.05 hits gets greater (and mind you, much of that range can be floored to a single value, hence why I despise low base damage parses) as cRatio continues below about 1.5 (I would estimate 1+LIA actually) it then shows that LIA continues to stay the same all the way down to 1.25 cRatio and you're getting more and more hits brought up to 1.0. In fewer words this lower clamp being removed coincides with the change to 0.25 LIA below 1.25 cRatio to make the transition between the two LIA values smoother.

Why? Because then it could be found that getting just below 1.25 cRatio gives you a better average damage than anything between 1.25 and maybe 1.45 or so (due to RAE-LIA imbalance going in opposite directions). The floor serves to keep the 'normal' LIA value from kicking a 1.26 cRatio lower in damage than a 1.24 cRatio.

-

2012-01-10 10:36 #31

That was just a simulation, not the result of data. I was just showing that it's possible to raise the frequency of an area without really adding any more equations. The code to generate that 10000 point simulation is like 15 lines long.

-

2012-01-10 11:37 #32Custom Title


I need to make breakfast before I chew on the weird part. If it's true that the spike persists even below 1.25 cRatio then my assertion about LIA changing is wrong.

-

2012-01-10 11:38 #33

It does. If you look at Masa's distribution study he has it highlighted in red in the raw data.

-

2012-01-10 12:03 #34Chram


Plus the warp cudgel sample I posted above has a 1.03 cRatio.

-

2012-01-10 12:29 #35Hydra

- Join Date

- Feb 2009

- Posts

- 123

- BG Level

- 3

- FFXI Server

- Bahamut

In both crit and non-crit data there is at least one large bump that a large portion of the data falls around. I have an old greater colibri merit parse with over 1k hits: I hit for 56 damage 130 times, 57 damage 146 times, and 58 damage 136 times. Of the remaining damage values between 30 and 91, none occurred more than 27 times. Looking at this old parse, it's quite interesting and shows that there is weighting around certain numbers. I will have to go back and look at more.

-

2012-01-10 12:57 #36Custom Title


Yeah, the spike is pretty even across both 23 and 24, 1.0 and 1.043. Certainly seems to be modified before Secondary. Having more 24s than 23s can be attributed to the floored normal (>1.0) hits up to about 1.089 Final pDif being added to it, while a natural 23 is rarer, just like the spike observed on crits.

Need to know what happens as 1.00 ratio is crossed though. Right now here's my considerations:

1. Min Crit pDif still follows LIA being 0.45

2. Hits are definitely being set to 1.0.

Potentially below 1.25 cRatio it changes to any hit below cRatio-0.25 being set to 1.0. This would take roughly the bottom quarter of the distribution and set them to 1.0. More precisely a RAE of 0.3 to keep with the pattern and 0.45 LIA gives that bottom 0.2 as being 26.6% of hits. Moten had 378 hits, 160 of them 23 or 24 for 42% of the total. Even arbitrarily shaving off 20 of the '24' hits and 15 of the '23' hits as being >1.0 hits floored it doesn't reach below being 33% of total.

Edit: Taking the range of cRatio to cRatio-0.25 and setting those to 1.0, then adding 0.25 to values below cRatio-0.25 appears to work until I see that he had a ton of 17 damage hits. Those 17 damage hits suggest a 0.55 LIA to me, just estimating. This now gives a 0.85 range that 0.25 is still almost 30% of, so this method may remain viable. This 0.55 LIA change gets defeated by min crits though, so the system might not be applied to crits but this also suggests that LIA remains 0.45.

Someone said the spike appeared in crit data, or was it just a 'bump'? If it's the same thing being applied to min crits there needs to be a corresponding spike at 2.0 crits.

-

2012-01-10 13:45 #37Chram


I should be able to get data a bit below 1.0. My sam is still level 95, and with an E club rating that means I would have 280 skill. I could probably get down to about 315 attack for 0.96 to 0.98 cRatio. Can at least see if there's any immediate change under 1.0.

-

2012-01-10 16:10 #38Custom Title


Okay, so the disparity is that 40% of total hits need to be made into 1.0's or just above 1.0, but there is only a 0.15 'bonus' on cRatio-LIA giving his 17 final damage.

His max damage indicates a 0.3 RAE, which fits the pattern I predicted in OP.

His 'un-spiked' average is below 1.0306+0.3(RAE)-0.45(LIA), suggesting that RAE-LIA is being modified to about -0.2. A 0.05 change in average from what is expected of his RAE and LIA values derived from min and max.

The 'floor' is presumed to begin at 1.5 cRatio, but LIA of 0.45 would suggest 1.45 instead. Masa has no data in this range. This 1.0 minimum then 'breaks' distinctly and harshly below 1.25 cRatio.

So it may be that 1.25-0.45=0.8. Values between 0.8 and 1.0 are set to 1.0, values below 0.8 are given a small bonus. 17 damage with 23 base suggests a 0.15 bonus, 0.2 is too large to give such a large count of 17s (comes out to 17.92 or so.)

An exact intersection at 1.25 is not necessary, since Masa's graph suggests a 'crash' in minimum just below 1.25 that may be more related to flooring but may instead be that 'harshness' of LIA kicking back in being offset by the bonus I'm suggesting not fully counteracting it.

So with a 0.15 bonus below 0.8 Intermediate pDif, and values between 0.8 and 1.0 being set to 1.0, I'll try Masa's 1.249 cRatio data.

1.249-0.45 = 0.799

(0.799+0.15)*43 = 40.8, which after Secondary becomes 41 or 42 damage. It would have been pure blind luck to have hit this value though, but it does appear to be oddly low in his graph.

1.229 cRatio gives 40.8, 41 or 42 after secondary, as well because of the base damage increase, but he only got a 43 damage hit in a slightly smaller sample size.

1.209 cRatio gives 39.996, 40 or 41 after secondary, and he only hit 42. Again shorter data though.

Skipping along, there's a larger sample size for 1.178 which gives 37.754 intermediate, 38 or 39 secondary. He got 39, so this is looking pretty solid.

Skipping again to his last point with over 1200 samples, 1.054 gives 32.422 intermediate, and 32 33 or 34 within secondary. He got 33, and these 43 base damage samples seem to be reaching these lower values easier probably due to some flooring arrangement.

So this seems very viable:

After Primary, if Intermediate pDif is less than 1.0 but greater than 0.8, set it to 1.0.

If it is less than 0.8, add 0.15.
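Written out as code, the proposed rule looks like the Python sketch below. It is only a statement of the hypothesis, not confirmed game logic; the function name is invented, and treating a value of exactly 0.8 as getting the bonus is an assumption since the rule above does not cover that boundary.

```python
def apply_soft_floor(intermediate_pdif: float, critical_floor: float = 0.8,
                     bonus: float = 0.15) -> float:
    """Hypothesised adjustment applied after Primary and before Secondary."""
    if intermediate_pdif >= 1.0:
        return intermediate_pdif            # untouched
    if intermediate_pdif > critical_floor:
        return 1.0                          # pulled up to the 1.0 'soft floor'
    return intermediate_pdif + bonus        # below the floor: small bonus only


if __name__ == "__main__":
    # Masa's 1.249 cRatio sample with 43 base damage and 0.45 LIA.
    minimum = apply_soft_floor(1.249 - 0.45)
    print(f"adjusted minimum pDif: {minimum:.3f}, damage before Secondary: {minimum * 43:.1f}")
```

The `critical_floor` argument is left adjustable so other thresholds can be tried against the same data.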

So working with Moten's data a bit and then losing my edit to hitting the back button, I'm gonna blow through this again.

RAE = 0.3

LIA = 0.45

Forced 1.0 hits: 0.2/(RAE+LIA)

Normal 1.0 hits: (25/23-1.0306)/(RAE+LIA)

Sub 1.0 hits: (0.8-(1.0306-0.45))/(RAE+LIA)

Simulated:

Forced 1.0: 26.66%

Normal 1.0: 7.51%

Spike hits: 34.17%

Sub 1.0: 29.25%

Moten's data: 378 hits

Spike hits: 160/378 42.3%

Sub 1.0: 121/378 32%
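The predicted shares can be recomputed with a few lines of Python. This sketch simply repeats the three ratios listed above under the assumption that Primary is uniform over the RAE+LIA spread; the function name and the `spike_top` parameter (25, the upper edge of the 24 bucket) are labels introduced here.

```python
def predicted_shares(c_ratio: float, base: float, spike_top: float,
                     rae: float = 0.3, lia: float = 0.45,
                     critical_floor: float = 0.8) -> dict:
    """Predicted fraction of hits in each band for a uniform Primary roll."""
    spread = rae + lia
    forced = (1.0 - critical_floor) / spread                 # set to exactly 1.0
    normal = (spike_top / base - c_ratio) / spread           # natural hits in the spike
    sub = (critical_floor - (c_ratio - lia)) / spread        # stays below 1.0
    return {"forced": forced, "normal": normal,
            "spike": forced + normal, "sub": sub}


if __name__ == "__main__":
    shares = predicted_shares(c_ratio=1.0306, base=23, spike_top=25)
    for name, value in shares.items():
        print(f"{name}: {value:.2%}")
    print(f"parsed spike share: {160 / 378:.1%}, parsed sub-1.0 share: {121 / 378:.1%}")
```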

Moten's number of spike hits initially argues against raising the 'critical floor' from 0.8 to 0.85 (just to make room for Masa's 1.249 data), but raising it would allow some natural high 0.9 range hits to be pushed into the 1.0 range by Secondary, and that gets hard to predict.

Edit edit: Yes, raising the critical floor to 0.85 makes room for Masa's 1.249 result and makes there be no 'gap' below 1.0 pDif. This doesn't affect too much the number of spike hits gained from the now smaller 'force 1.0' range because you'll have natural 0.80-0.85 hits getting +0.15 and pushed up by Secondary into the same range while opening up the sub-1.0 range to about 35.9%, which is no further from Moten's parsed data than before and the 29.25% figure is still guaranteed to be below 1.0 while potentially half or more the remaining 6.65% can be added to 1.0 spike hits due to Secondary.

So in conclusion:

There is a 'soft floor' of 1.0 as long as Intermediate pDif remains above 0.85.

There is a 'critical floor' of 0.85 where Intermediate pDif below this get only a +0.15 bonus.

This applies below 1.3 cRatio, where 1.3-LIA=0.85

If anyone can confirm that low cRatio crit distributions have the spike, this should apply perfectly to them too with the Critical Floor considered before adding 1.0. If there is no spike in crit data, then it is considered after 1.0 is added and irrelevant.

-

2012-01-11 04:42 #39MasamuneGuest

After skimming this thread, I agree with this:

Originally Posted by Raellia

After trying multiple formulations with 1, 2 or even 3 randomizers, the closest and simplest one Excel showed me, when compared with my parse distributions, was something similar to Darkhorror's simplification of your formula, except I never managed to pinpoint acceptable values for the parameters you call RAE and LIA that match ALL my observed maxes and mins at ANY cRatio (even allowing a 1 dmg discrepancy).

But before dropping this madness, i kept notes about something that might help you guys:

- use highest base dmg weapon

- try to get as much crit% as possible (and also multihits) to make the parsing shorter...

- avoid any additional JA or buff that could add another term into the formula

That 3rd note might actually be a precious help in rejecting a formula/parameter's value over another :

Adding the piercing bonus vs the colibris I was testing showed the damage formula NOT generating any dmg value ending with a "4" or "7", even after 3k hits, regularly leaving holes in the distributions. In comparison, all my other parses generated every single possible value within the [MIN;MAX] range.

That means if your proposed formula can generate such value => that's too bad, go find something else :/

-

2012-01-11 13:51 #40Custom Title


Nope, this one is probably quite easy. Piercing bonus can be simply applied after final damage is floored, and then re-floored. Are you quite sure you're not mis-remembering and it was instead 4s and 9s that were missing though? Because that's what happens with flooring before and after a 1.25 factor.

Type weaknesses being outside Secondary also explains how they are easily found by regular people instead of getting fuddled to something like 25% looking like 25.6% by Secondary.

Edit: Yup, that was easy. Took Joyeuse out on some birds PLD/DNC. Plenty of '7's but not a single hit ending '4' or '9', including crits.

It's rather short data, but at least points out that you're probably misstating, and flooring before and after a 1.25 factor explains it perfectly.
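The missing-digit pattern is easy to reproduce. A minimal Python sketch, assuming the type bonus is a flat 1.25 multiplier applied to an already-floored damage value and then floored again (the function name is made up):

```python
import math


def last_digits_after_bonus(max_damage: int = 1000, factor: float = 1.25) -> set:
    """Final digits that can appear when an integer damage value is
    multiplied by `factor` and floored again."""
    return {math.floor(dmg * factor) % 10 for dmg in range(1, max_damage + 1)}


if __name__ == "__main__":
    seen = last_digits_after_bonus()
    missing = sorted(set(range(10)) - seen)
    print(f"digits that never appear: {missing}")
```

Every block of four consecutive inputs maps onto five output values, so one value in each five is skipped, and those skipped values are the ones ending in 4 and 9.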
